Lecture 14 - (26/03/2026)

Today’s Topics:

PCA Dimensionality Reduction

PCA Handwritten Digits

PCA Noise Filtering

PCA Eigenfaces

PCA Eigenvoices

PCA as dimensionality reduction¶

Last time we introduced PCA as a way to find vectors that represent the principal axes of the data, where the length of the vector is an indication of how “important” that axis is in describing the distribution of the data.

Using PCA for dimensionality reduction involves zeroing out one or more of the smallest principal components, resulting in a lower-dimensional projection of the data that preserves the maximal data variance.

Here is an example of using PCA as a dimensionality reduction transform:

from sklearn.decomposition import PCA

import numpy as np

import pandas as pd

import seaborn as sns

import plotly.express as px

import plotly.graph_objects as go

from plotly.subplots import make_subplots

sns.set_style('white')Source

rng = np.random.RandomState(1)

X = np.dot(rng.rand(2, 2), rng.randn(2, 200)).T

fig = px.scatter(

x=X[:, 0],

y=X[:, 1],

labels={"x": "X1", "y": "X2"}

)

fig.update_layout(

yaxis_scaleanchor="x",

)

fig.show()pca = PCA(n_components=1)

pca.fit(X)

X_pca = pca.transform(X)

print("original shape: ", X.shape)

print("transformed shape:", X_pca.shape)original shape: (200, 2)

transformed shape: (200, 1)

The transformed data has been reduced to a single dimension. To understand the effect of this dimensionality reduction, we can perform the inverse transform of this reduced data and plot it along with the original data:

X_new = pca.inverse_transform(X_pca)Source

fig = go.Figure()

fig.add_trace(go.Scatter(

x=X[:, 0],

y=X[:, 1],

mode='markers',

marker=dict(opacity=0.2),

name='Original'

))

fig.add_trace(go.Scatter(

x=X_new[:, 0],

y=X_new[:, 1],

mode='markers',

marker=dict(opacity=0.8),

name='Reconstructed'

))

fig.update_layout(

xaxis_title="X1",

yaxis_title="X2",

yaxis_scaleanchor="x",

)

fig.show()The light blue points are the original data, while the red points are the projected version. This makes clear what a PCA dimensionality reduction does: the information along the least important principal axis or axes is removed, leaving only the component(s) of the data with the highest variance. The fraction of variance that is cut out (proportional to the spread of points about the line formed in this figure) is roughly a measure of how much “information” is discarded in this reduction of dimensionality.

This reduced-dimension dataset good enough to encode the most important relationships between the points: despite reducing the dimension of the data by 50%, the overall relationship between the data points are mostly preserved.

Let’s try a similar example in 3D:

from sklearn.datasets import make_blobs

X, y = make_blobs(n_samples=500, n_features=3, centers=3, cluster_std=1)Source

fig = go.Figure()

fig.add_trace(go.Scatter3d(

x=X[:, 0], y=X[:, 1], z=X[:, 2],

mode='markers',

marker=dict(size=4, opacity=0.5, color='blue'),

name='Original'

))

fig.show()To project it down to 2D:

pca2 = PCA(n_components=2)

pca2.fit(X)

X_pca2 = pca.transform(X)

X_projected2 = pca.inverse_transform(X_pca2)---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

Cell In[20], line 3

1 pca2 = PCA(n_components=2)

2 pca2.fit(X)

----> 3 X_pca2 = pca.transform(X)

4 X_projected2 = pca.inverse_transform(X_pca2)

File ~/git/data-science-sp26/.venv/lib/python3.14/site-packages/sklearn/utils/_set_output.py:316, in _wrap_method_output.<locals>.wrapped(self, X, *args, **kwargs)

314 @wraps(f)

315 def wrapped(self, X, *args, **kwargs):

--> 316 data_to_wrap = f(self, X, *args, **kwargs)

317 if isinstance(data_to_wrap, tuple):

318 # only wrap the first output for cross decomposition

319 return_tuple = (

320 _wrap_data_with_container(method, data_to_wrap[0], X, self),

321 *data_to_wrap[1:],

322 )

File ~/git/data-science-sp26/.venv/lib/python3.14/site-packages/sklearn/decomposition/_base.py:142, in _BasePCA.transform(self, X)

138 xp, _ = get_namespace(X, self.components_, self.explained_variance_)

140 check_is_fitted(self)

--> 142 X = validate_data(

143 self,

144 X,

145 dtype=[xp.float64, xp.float32],

146 accept_sparse=("csr", "csc"),

147 reset=False,

148 )

149 return self._transform(X, xp=xp, x_is_centered=False)

File ~/git/data-science-sp26/.venv/lib/python3.14/site-packages/sklearn/utils/validation.py:2923, in validate_data(_estimator, X, y, reset, validate_separately, skip_check_array, **check_params)

2920 out = X, y

2922 if not no_val_X and check_params.get("ensure_2d", True):

-> 2923 _check_n_features(_estimator, X, reset=reset)

2925 return out

File ~/git/data-science-sp26/.venv/lib/python3.14/site-packages/sklearn/utils/validation.py:2787, in _check_n_features(estimator, X, reset)

2784 return

2786 if n_features != estimator.n_features_in_:

-> 2787 raise ValueError(

2788 f"X has {n_features} features, but {estimator.__class__.__name__} "

2789 f"is expecting {estimator.n_features_in_} features as input."

2790 )

ValueError: X has 3 features, but PCA is expecting 2 features as input.Source

fig = go.Figure()

fig.add_trace(go.Scatter3d(

x=X[:, 0], y=X[:, 1], z=X[:, 2],

mode='markers',

marker=dict(size=4, opacity=0.2, color='blue'),

name='Original'

))

fig.add_trace(go.Scatter3d(

x=X_projected2[:, 0], y=X_projected2[:, 1], z=X_projected2[:, 2],

mode='markers',

marker=dict(size=4, opacity=0.8, color='red'),

name='Projected'

))

for i, vec in enumerate(pca2.components_):

fig.add_trace(go.Scatter3d(

x=[pca2.mean_[0], pca2.mean_[0] + vec[0]*5],

y=[pca2.mean_[1], pca2.mean_[1] + vec[1]*5],

z=[pca2.mean_[2], pca2.mean_[2] + vec[2]*5],

mode='lines+markers',

marker=dict(size=2, color='black'),

line=dict(width=4),

name=f'PC{i+1}'

))

fig.show()---------------------------------------------------------------------------

NameError Traceback (most recent call last)

Cell In[21], line 11

1 fig = go.Figure()

3 fig.add_trace(go.Scatter3d(

4 x=X[:, 0], y=X[:, 1], z=X[:, 2],

5 mode='markers',

6 marker=dict(size=4, opacity=0.2, color='blue'),

7 name='Original'

8 ))

10 fig.add_trace(go.Scatter3d(

---> 11 x=X_projected2[:, 0], y=X_projected2[:, 1], z=X_projected2[:, 2],

12 mode='markers',

13 marker=dict(size=4, opacity=0.8, color='red'),

14 name='Projected'

15 ))

17 for i, vec in enumerate(pca2.components_):

18 fig.add_trace(go.Scatter3d(

19 x=[pca2.mean_[0], pca2.mean_[0] + vec[0]*5],

20 y=[pca2.mean_[1], pca2.mean_[1] + vec[1]*5],

(...) 25 name=f'PC{i+1}'

26 ))

NameError: name 'X_projected2' is not definedBut this begs the question, can we reduce it further down to 1 dimension?

pca1 = PCA(n_components=1)

pca1.fit(X)

X_pca1 = pca1.transform(X)

X_projected1 = pca1.inverse_transform(X_pca)Source

fig = go.Figure()

fig.add_trace(go.Scatter3d(

x=X[:, 0], y=X[:, 1], z=X[:, 2],

mode='markers',

marker=dict(size=4, opacity=0.2, color='blue'),

name='Original'

))

fig.add_trace(go.Scatter3d(

x=X_projected1[:, 0], y=X_projected1[:, 1], z=X_projected1[:, 2],

mode='markers',

marker=dict(size=4, opacity=0.8, color='red'),

name='Projected'

))

for i, vec in enumerate(pca1.components_):

fig.add_trace(go.Scatter3d(

x=[pca1.mean_[0], pca1.mean_[0] + vec[0]*5],

y=[pca1.mean_[1], pca1.mean_[1] + vec[1]*5],

z=[pca1.mean_[2], pca1.mean_[2] + vec[2]*5],

mode='lines+markers',

marker=dict(size=2, color='black'),

line=dict(width=4),

name=f'PC{i+1}'

))

fig.show()We can see that 1D alone captures the seperation between the blobs, but 2D allows us to see that these blobs are spherical in shape.

Source

fig = go.Figure()

fig.add_trace(go.Scatter(

x=X_projected1[:, 0],

y=X_projected1[:, 1],

mode='markers',

marker=dict(size=6, color='blue', opacity=0.6),

name='1D PCA Reconstruction'

))

fig.add_trace(go.Scatter(

x=X_projected2[:, 0],

y=X_projected2[:, 1],

mode='markers',

marker=dict(size=6, color='red', opacity=0.8),

name='2D PCA Reconstruction'

))

fig.show()Notice that as the dimensions go down the density of points along a axis increase, this fundemental concept is called the curse of dimensionality.

In low-dimensional spaces, it’s relatively easy to visualise data, measure distances, and identify patterns. But as the number of dimensions increases:

Data becomes sparse (much more spread out)

Distance metrics lose meaning

Increased computational cost

PCA Handwritten Digits¶

The usefulness of the dimensionality reduction may not be entirely apparent in only two dimensions, but becomes much more clear when looking at high-dimensional data. To see this, let’s take a quick look at the application of PCA to the MNIST example we saw back in Logistic Classification.

from sklearn.datasets import load_digits

digits = load_digits()

digits.data.shape(1797, 64)Recall that the data consists of 8×8 pixel images, meaning that they are 64-dimensional. To gain some intuition into the relationships between these points, we can use PCA to project them to a more manageable number of dimensions, say two:

pca = PCA(2) # project from 64 to 2 dimensions

projected = pca.fit_transform(digits.data)

print(digits.data.shape)

print(projected.shape)(1797, 64)

(1797, 2)

We can now plot the first two principal components of each point to learn about the data:

Source

scatter = go.Scatter(

x=projected[:, 0],

y=projected[:, 1],

mode='markers',

marker=dict(

color=digits.target,

colorscale='Viridis',

size=6,

opacity=0.7

),

customdata=np.arange(len(digits.data)), # store index

)

# Initial image

img = digits.data[0].reshape(8, 8)

heatmap = go.Heatmap(

z=img,

colorscale='gray',

showscale=False

)

# Subplots

from plotly.subplots import make_subplots

fig = make_subplots(

rows=1, cols=2,

column_widths=[0.4, 0.6],

specs=[[{"type": "heatmap"}, {"type": "scatter"}]]

)

fig.add_trace(heatmap, row=1, col=1)

fig.add_trace(scatter, row=1, col=2)

fig.update_layout(

title="Click a point to view digit",

height=500

)

fig.write_html(

"interactive_digits.html",

include_plotlyjs='cdn',

post_script="""

const plot = document.querySelectorAll('.plotly-graph-div')[0];

plot.on('plotly_click', function(data) {

const idx = data.points[0].customdata;

const digits = %s;

const img = digits[idx];

const update = {

z: [img]

};

Plotly.restyle(plot, update, [0]);

});

""" % digits.data.reshape(-1, 8, 8).tolist()

)

fig---------------------------------------------------------------------------

NameError Traceback (most recent call last)

Cell In[1], line 1

----> 1 scatter = go.Scatter(

2 x=projected[:, 0],

3 y=projected[:, 1],

4 mode='markers',

5 marker=dict(

6 color=digits.target,

7 colorscale='Viridis',

8 size=6,

9 opacity=0.7

10 ),

11 customdata=np.arange(len(digits.data)), # store index

12 )

14 # Initial image

15 img = digits.data[0].reshape(8, 8)

NameError: name 'go' is not definedRecall what these components mean: the full data is a 64-dimensional point cloud, and these points are the projection of each data point along the directions with the largest variance. Essentially, we have found the optimal stretch and rotation in 64-dimensional space that allows us to see the layout of the digits in two dimensions, and have done this in an unsupervised manner—that is, without reference to the labels.

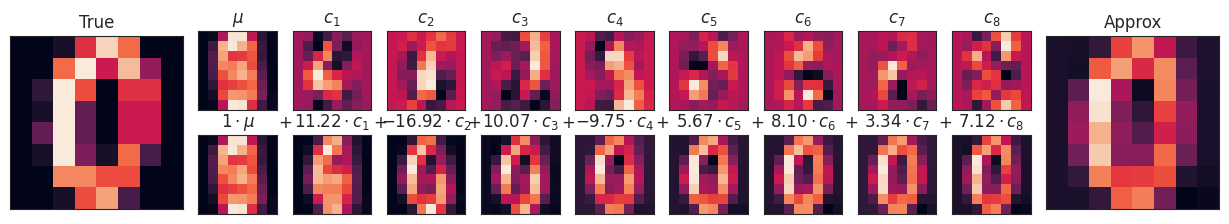

We can go a bit further here, and begin to ask what the reduced dimensions really mean? This meaning can be understood in terms of combinations of basis vectors. For example, each image in the training set is defined by a collection of 64 pixel values, which we will call the vector x.

One way we can think about this is in terms of a pixel basis. That is, to construct the image, we multiply each element of the vector by the pixel it describes, and then add the results together to build the image:

One way we might imagine reducing the dimension of this data is to zero out all but a few of these basis vectors. For example, if we use only the first eight pixels, we get an eight-dimensional projection of the data, but it is not very reflective of the whole image: we’ve thrown out nearly 90% of the pixels!!!

Source

def plot_pca_components(x, coefficients=None, mean=0, components=None,

imshape=(8, 8), n_components=8, fontsize=12,

show_mean=True):

if coefficients is None:

coefficients = x

if components is None:

components = np.eye(len(coefficients), len(x))

mean = np.zeros_like(x) + mean

fig = plt.figure(figsize=(1.2 * (5 + n_components), 1.2 * 2))

g = plt.GridSpec(2, 4 + bool(show_mean) + n_components, hspace=0.3)

def show(i, j, x, title=None):

ax = fig.add_subplot(g[i, j], xticks=[], yticks=[])

ax.imshow(x.reshape(imshape), interpolation='nearest')

if title:

ax.set_title(title, fontsize=fontsize)

show(slice(2), slice(2), x, "True")

approx = mean.copy()

counter = 2

if show_mean:

show(0, 2, np.zeros_like(x) + mean, r'$\mu$')

show(1, 2, approx, r'$1 \cdot \mu$')

counter += 1

for i in range(n_components):

approx = approx + coefficients[i] * components[i]

show(0, i + counter, components[i], r'$c_{0}$'.format(i + 1))

show(1, i + counter, approx,

r"${0:.2f} \cdot c_{1}$".format(coefficients[i], i + 1))

if show_mean or i > 0:

plt.gca().text(0, 1.05, '$+$', ha='right', va='bottom',

transform=plt.gca().transAxes, fontsize=fontsize)

show(slice(2), slice(-2, None), approx, "Approx")

return figdigits = load_digits()

fig = plot_pca_components(digits.data[10],

show_mean=False)---------------------------------------------------------------------------

NameError Traceback (most recent call last)

Cell In[12], line 1

----> 1 digits = load_digits()

3 fig = plot_pca_components(digits.data[10],

4 show_mean=False)

NameError: name 'load_digits' is not definedThe upper row of panels shows the individual pixels, and the lower row shows the cumulative contribution of these pixels to the construction of the image. Using only eight of the pixel-basis components, we can only construct a small portion of the 64-pixel image. Were we to continue this sequence and use all 64 pixels, we would recover the original image.

But the pixel-wise representation is not the only choice of basis. We can also use other basis functions, which each contain some pre-defined contribution from each pixel, and write something like

PCA can be thought of as a process of choosing optimal basis functions, such that adding together just the first few of them is enough to suitably reconstruct the bulk of the elements in the dataset. The principal components, which act as the low-dimensional representation of our data, are simply the coefficients that multiply each of the elements in this series. This figure shows a similar depiction of reconstructing this digit using the mean plus the first eight PCA basis functions:

pca = PCA(n_components=8)

Xproj = pca.fit_transform(digits.data)

fig = plot_pca_components(digits.data[10], Xproj[10],

pca.mean_, pca.components_)

Unlike the pixel basis, the PCA basis allows us to recover the key features of the input image with just a mean plus eight components! The amount of each pixel in each component is the corollary of the orientation of the vector in our two-dimensional example. This is the sense in which PCA provides a low-dimensional representation of the data: it discovers a set of basis functions that are more efficient than the native pixel-basis of the input data.

Choosing the number of components¶

A vital part of using PCA in practice is the ability to estimate how many components are needed to describe the data. This can be determined by looking at the cumulative explained variance ratio as a function of the number of components:

pca = PCA().fit(digits.data)

cumulative_variance = np.cumsum(pca.explained_variance_ratio_)pca.explained_variance_ratio_array([0.148906, 0.136188, 0.117946, 0.0841 , 0.057824, 0.049169,

0.04316 , 0.036614, 0.033532, 0.030788, 0.023723, 0.022727,

0.018219, 0.017739, 0.014671, 0.014097, 0.013186, 0.012481,

0.010177, 0.009056, 0.008895, 0.007971, 0.007675, 0.007229,

0.006959, 0.005961, 0.005756, 0.005152, 0.004895, 0.004289,

0.003736, 0.003533, 0.003367, 0.00328 , 0.003083, 0.002938,

0.002566, 0.002277, 0.002223, 0.002114, 0.001899, 0.001587,

0.001512, 0.001406, 0.001166, 0.001075, 0.000964, 0.000775,

0.000557, 0.000404, 0.00021 , 0.000082, 0.000053, 0.000051,

0.000033, 0.000012, 0.000007, 0.000003, 0.000001, 0.000001,

0. , 0. , 0. , 0. ])fig = px.line(cumulative_variance)

fig.update_layout(

xaxis_title='Number of Components',

yaxis_title='Cumulative Explained Variance',

yaxis=dict(range=[0, 1])

)

fig.show()This curve quantifies how much of the total, 64-dimensional variance is contained within the first N components. For example, we see that with the digits the first 10 components contain approximately 75% of the variance, while you need around 50 components to describe close to 100% of the variance.

Here we see that our two-dimensional projection loses a lot of information (as measured by the explained variance) and that we’d need about 20 components to retain 90% of the variance. Looking at this plot for a high-dimensional dataset can help you understand the level of redundancy present in multiple observations.

PCA as Noise Filtering¶

PCA can also be used as a filtering approach for noisy data. The idea is this: any components with variance much larger than the effect of the noise should be relatively unaffected by the noise. So if you reconstruct the data using just the largest subset of principal components, you should be preferentially keeping the signal and throwing out the noise.

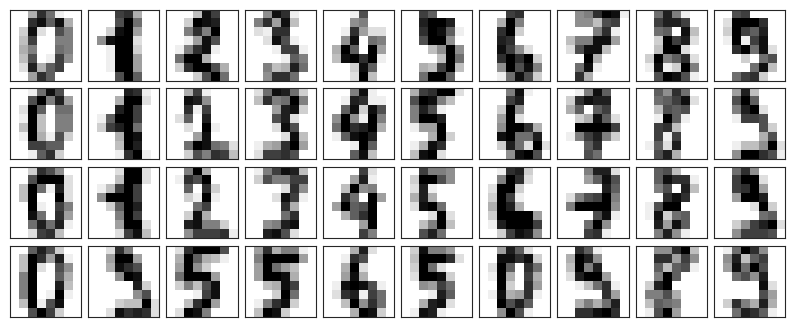

Let’s see how this looks with the digits data. First we will plot several of the input noise-free data:

def plot_digits(data):

fig, axes = plt.subplots(4, 10, figsize=(10, 4),

subplot_kw={'xticks':[], 'yticks':[]},

gridspec_kw=dict(hspace=0.1, wspace=0.1))

for i, ax in enumerate(axes.flat):

ax.imshow(data[i].reshape(8, 8),

cmap='binary', interpolation='nearest',

clim=(0, 16))plot_digits(digits.data)

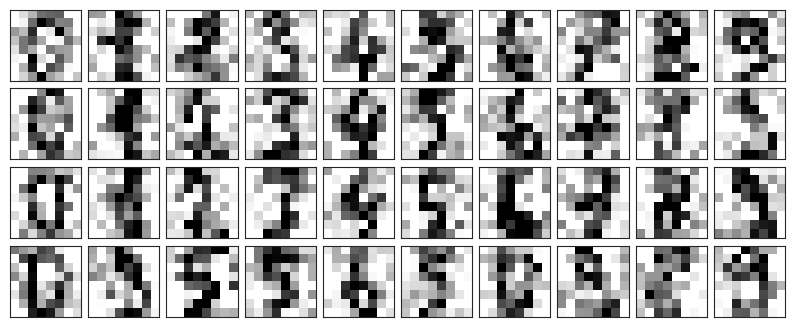

Now lets add some random noise to create a noisy dataset, and re-plot it:

noisy = np.random.normal(digits.data, 4)

plot_digits(noisy)

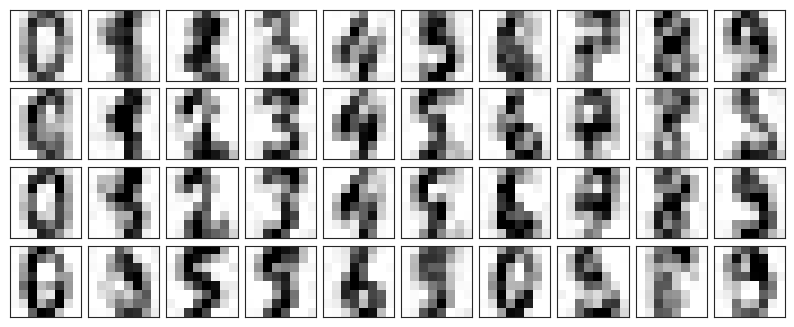

It’s clear by eye that the images are noisy, and contain random pixels. Let’s train a PCA on the noisy data, requesting that the projection preserve 50% of the variance:

pca = PCA(0.50).fit(noisy)

pca.n_components_np.int64(12)Here 50% of the variance amounts to 12 principal components. Now we compute these components, and then use the inverse of the transform to reconstruct the filtered digits:

components = pca.transform(noisy)

filtered = pca.inverse_transform(components)

plot_digits(filtered)

This signal preserving/noise filtering property makes PCA a very useful feature selection routine—for example, rather than training a classifier on very high-dimensional data, you might instead train the classifier on the lower-dimensional representation, which will automatically serve to filter out random noise in the inputs.

PCA for Facial Data¶

Let’s take a look at the facial recognition problem. We will use the Labeled Faces in the Wild dataset, which consists of several thousand collated photos of various public figures. A fetcher for the dataset is built into Scikit-Learn:

from sklearn.datasets import fetch_lfw_people

faces = fetch_lfw_people(min_faces_per_person=60)

print(faces.target_names)

print(faces.images.shape)['Ariel Sharon' 'Colin Powell' 'Donald Rumsfeld' 'George W Bush'

'Gerhard Schroeder' 'Hugo Chavez' 'Junichiro Koizumi' 'Tony Blair']

(1348, 62, 47)

Let’s take a look at the principal axes that span this dataset. Because this is a large dataset, we will use RandomizedPCA as it contains a randomized method to approximate the first N principal components much more quickly than the standard PCA estimator, and thus is very useful for high-dimensional data (here, a dimensionality of nearly 3K). We will take a look at the first 150 components:

pca = PCA(150, svd_solver='randomized')

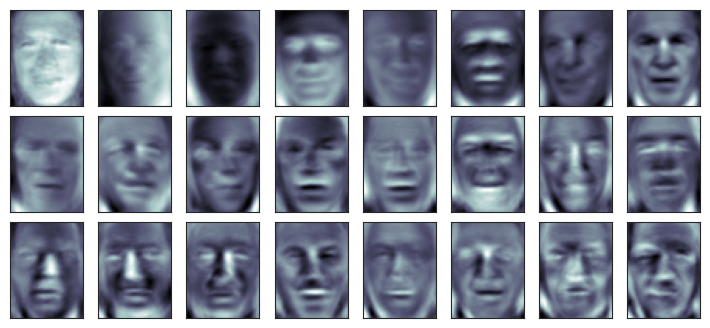

pca.fit(faces.data)In this case, it can be interesting to visualize the images associated with the first several principal components (these components are technically known as “eigenvectors,” so these types of images are often called “eigenfaces”, and any further field where you analyse these will be eigen-whatever). As you can see in this figure, they are as creepy as they sound:

fig, axes = plt.subplots(3, 8, figsize=(9, 4),

subplot_kw={'xticks':[], 'yticks':[]},

gridspec_kw=dict(hspace=0.1, wspace=0.1))

for i, ax in enumerate(axes.flat):

ax.imshow(pca.components_[i].reshape(62, 47), cmap='bone')

The results are very interesting, and give us insight into how the images vary: for example, the first few eigenfaces (from the top left) seem to be associated with the angle of lighting on the face, and later principal vectors seem to be picking out certain features, such as eyes, noses, and lips. Let’s take a look at the cumulative variance of these components to see how much of the data information the projection is preserving:

cumulative_variance = np.cumsum(pca.explained_variance_ratio_)

fig = px.line(cumulative_variance)

fig.update_layout(

xaxis_title='Number of Components',

yaxis_title='Cumulative Explained Variance',

yaxis=dict(range=[0, 1])

)

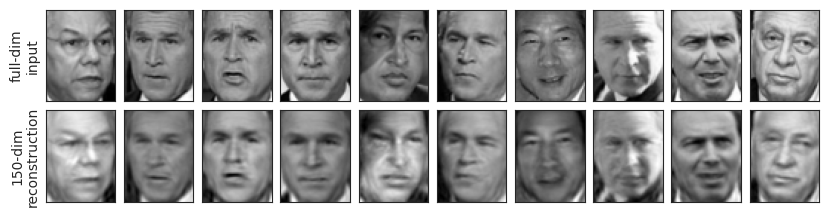

fig.show()We see that these 150 components account for just over 90% of the variance. That would lead us to believe that using these 150 components, we would recover most of the essential characteristics of the data. To make this more concrete, we can compare the input images with the images reconstructed from these 150 components:

ca = PCA(150, svd_solver='randomized').fit(faces.data)

components = pca.transform(faces.data)

projected = pca.inverse_transform(components)fig, ax = plt.subplots(2, 10, figsize=(10, 2.5),

subplot_kw={'xticks':[], 'yticks':[]},

gridspec_kw=dict(hspace=0.1, wspace=0.1))

for i in range(10):

ax[0, i].imshow(faces.data[i].reshape(62, 47), cmap='binary_r')

ax[1, i].imshow(projected[i].reshape(62, 47), cmap='binary_r')

ax[0, 0].set_ylabel('full-dim\ninput')

ax[1, 0].set_ylabel('150-dim\nreconstruction');

The top row here shows the input images, while the bottom row shows the reconstruction of the images from just 150 of the ~3000 initial features. What this means is that our classification algorithm can be trained on 150-dimensional data rather than 3000-dimensional data, which depending on the particular algorithm we choose, can lead to a much more efficient classification!

PCA for Vocal Decomposition¶

url = "https://raw.githubusercontent.com/jim-schwoebel/sample_voice_data/master/gender_all.csv"

df = pd.read_csv(url)

mfcc_cols = [c for c in df.columns if c.startswith("mfcc_")]

X_mfcc = df[mfcc_cols].values

y = df['class_'].values

print("MFCC feature matrix shape:", X_mfcc.shape)MFCC feature matrix shape: (191, 65)

from sklearn.decomposition import PCA

pca = PCA(n_components=2)

X_pca = pca.fit_transform(X_mfcc)

print("Explained variance ratio (first 2 PCs):", pca.explained_variance_ratio_)Explained variance ratio (first 2 PCs): [0.629214 0.210381]

df_plot = pd.DataFrame({

"PC1": X_pca[:, 0],

"PC2": X_pca[:, 1],

"label": y

})

fig = px.scatter(df_plot, x="PC1", y="PC2", color="label",

title="Eigenvoices PCA (MFCC only)")

fig.show()import os

import librosa.feature

import librosa.display

def load_wavs_to_mfcc(folder, sr=16000, n_mfcc=13, max_len=200):

data = []

for file in os.listdir(folder):

if file.endswith(".wav"):

path = os.path.join(folder, file)

y, _ = librosa.load(path, sr=sr)

mfcc = librosa.feature.mfcc(y=y, sr=sr, n_mfcc=n_mfcc)

if mfcc.shape[1] < max_len:

pad_width = max_len - mfcc.shape[1]

mfcc = np.pad(mfcc, ((0,0),(0,pad_width)))

else:

mfcc = mfcc[:, :max_len]

data.append(mfcc.flatten())

return np.array(data)

def mfcc_to_audio(mfcc_flat, sr=16000, n_mfcc=13, max_len=200):

mfcc = mfcc_flat.reshape(n_mfcc, max_len)

mel_spec = librosa.feature.inverse.mfcc_to_mel(mfcc)

audio = librosa.feature.inverse.mel_to_audio(mel_spec, sr=sr)

return audiofrom sklearn.decomposition import PCA

X = load_wavs_to_mfcc("../attachments/voices")

mean = X.mean(axis=0)

X_centered = X - mean

pca = PCA(0.9)

X_pca = pca.fit_transform(X_centered)for p in pca.components_:

print(p)[ 0.033966 0.029549 0.031811 ... -0.001002 -0.000166 0.000428]

[-0.055923 -0.058963 -0.04884 ... -0.00046 0.0007 0.001205]

[-0.036021 -0.027198 -0.024226 ... -0.001305 0.002365 0.00391 ]

[ 0.096355 0.107422 0.128197 ... 0.00179 -0.002227 -0.004043]

[ 0.010624 0.020735 0.058414 ... 0.000163 -0.000063 -0.000186]

[-0.028806 -0.03032 -0.04286 ... -0.000315 0.000864 0.001325]

def play_eigenvoice(component_idx, scale=2.0):

vec = mean + scale * pca.components_[component_idx]

audio = mfcc_to_audio(vec)

return Audio(audio, rate=16000)print(pca.components_[0])

play_eigenvoice(0)[ 0.033966 0.029549 0.031811 ... -0.001002 -0.000166 0.000428]